Machine learning model predictions can deliver a strong return on investment. The work includes large-scale data preparation and processing (dataprep/dataproc) for classifying SQL and NoSQL data. Examples:

- ✓ Clustering
- ✓ Recommendation systems
- ✓ Net Promoter Score (NPS)
- ✓ Churn
- ✓ Pricing
- ✓ Competitor analysis
- ✓ Sales geolocation
- ✓ Predicting whether a customer will churn or not
- ✓ Classifying email messages as spam or not spam
- ✓ Detecting fraudulent credit card transactions
- ✓ Classifying images of different types of flowers
- ✓ Categorizing news articles into different topics
- ✓ Identifying the sentiment (positive, negative, or neutral) of customer reviews
- ✓ Determining the risk of loan default
- ✓ Predicting whether a patient will respond well to a certain treatment
- ✓ Forecasting sales for a retail business
- ✓ Identifying the most important features for predicting customer churn
- ✓ Recognizing handwritten digits
- ✓ Generating human-like text based on input
- ✓ Segmenting customers into different groups based on their buying behavior
- ✓ Grouping similar documents or articles together
- ✓ Identifying customer segments with similar preferences
- ✓ Organizing a large collection of images into meaningful groups
- ✓ Training a robot to navigate through a maze efficiently
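Of the examples above, NPS is the one defined by a simple formula: the percentage of promoters (ratings 9 to 10 on the 0 to 10 "how likely are you to recommend us" question) minus the percentage of detractors (ratings 0 to 6). The sketch below computes it in plain Python; the function name and the sample ratings are hypothetical and only illustrate the arithmetic, not the author's pipeline.

```python
def net_promoter_score(scores):
    """Return NPS in [-100, 100] from 0-10 likelihood-to-recommend ratings."""
    if not scores:
        raise ValueError("need at least one rating")
    for s in scores:
        if not 0 <= s <= 10:
            raise ValueError(f"rating out of range: {s}")
    promoters = sum(1 for s in scores if s >= 9)   # 9 and 10
    detractors = sum(1 for s in scores if s <= 6)  # 0 through 6
    # Passives (7 and 8) count toward the total but toward neither group.
    return 100.0 * (promoters - detractors) / len(scores)


# Hypothetical survey: 4 promoters, 3 passives, 3 detractors.
ratings = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
print(net_promoter_score(ratings))  # → 10.0
```

In an ML setting, the predicted quantity is usually the individual rating or the promoter/detractor class; the score itself is then aggregated with this formula.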

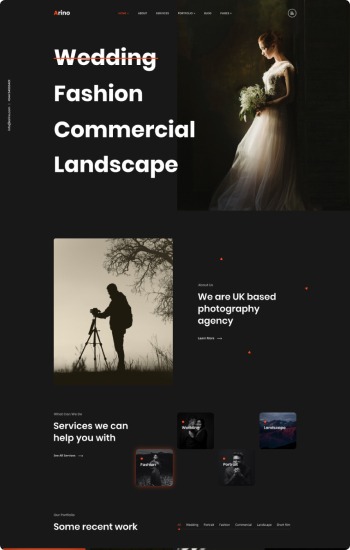

Clothes Try-On

Convolutional Networks

CNN GANs

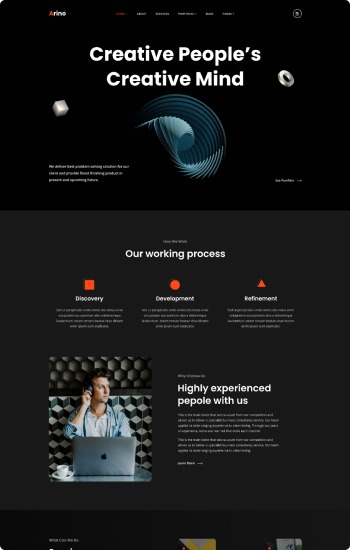

BRAND & PRODUCT 3D RENDER

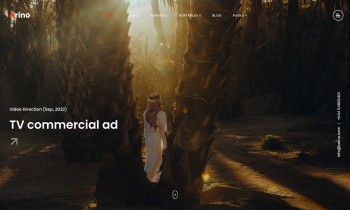

Text to Video.

LLMs NLP-TRY

Fine-tuning Transformers

Elastic Net Lasso
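Elastic net combines the Lasso (L1) penalty with the ridge (L2) penalty. A minimal sketch, assuming the scikit-learn-style objective (1/2n)·‖y − Xw‖² + α·ρ·‖w‖₁ + (α(1 − ρ)/2)·‖w‖², with no intercept, fitted by cyclic coordinate descent in pure Python. The names `elastic_net_cd` and `soft_threshold` and the toy data are hypothetical; setting `l1_ratio=1.0` reduces it to the Lasso.

```python
def soft_threshold(z, gamma):
    """Shrink z toward zero by gamma (the proximal step of the L1 penalty)."""
    if z > gamma:
        return z - gamma
    if z < -gamma:
        return z + gamma
    return 0.0


def elastic_net_cd(X, y, alpha=0.1, l1_ratio=0.5, n_iter=200, tol=1e-8):
    """Hypothetical elastic-net fit by cyclic coordinate descent (no intercept)."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    residual = list(y)  # r = y - Xw, starting from w = 0
    # Mean squared value of each feature column.
    col_sq = [sum(X[i][j] ** 2 for i in range(n)) / n for j in range(p)]
    l1 = alpha * l1_ratio          # weight of the Lasso term
    l2 = alpha * (1.0 - l1_ratio)  # weight of the ridge term
    for _ in range(n_iter):
        max_change = 0.0
        for j in range(p):
            denom = col_sq[j] + l2
            if denom == 0.0:
                continue  # all-zero column with no ridge term: leave w[j] at 0
            old = w[j]
            # Correlation of feature j with the partial residual
            # (the residual with feature j's own contribution added back).
            rho = sum(X[i][j] * (residual[i] + X[i][j] * old) for i in range(n)) / n
            new = soft_threshold(rho, l1) / denom
            delta = new - old
            if delta != 0.0:
                for i in range(n):
                    residual[i] -= X[i][j] * delta
                w[j] = new
                max_change = max(max_change, abs(delta))
        if max_change < tol:
            break
    return w


# Toy data: y = 2 * x0, and x1 is orthogonal to both x0 and y.
X = [[1.0, 0.5], [2.0, -1.0], [3.0, 0.0], [4.0, 1.0], [5.0, -0.5]]
y = [2.0, 4.0, 6.0, 8.0, 10.0]
w = elastic_net_cd(X, y, alpha=0.1, l1_ratio=0.5)
print([round(v, 4) for v in w])  # → [1.9864, 0.0]
```

The L1 part drives the irrelevant coefficient to exactly zero, while both penalties shrink the true coefficient slightly below 2; this combination of sparsity and ridge-style stability is why elastic net is often preferred over plain Lasso when features are correlated.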

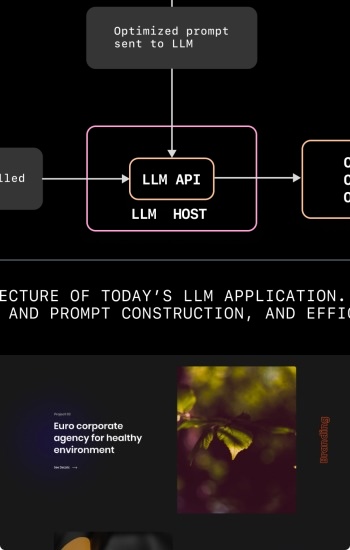

INFERENCE LLMS

LSTM

Diffusion transformers

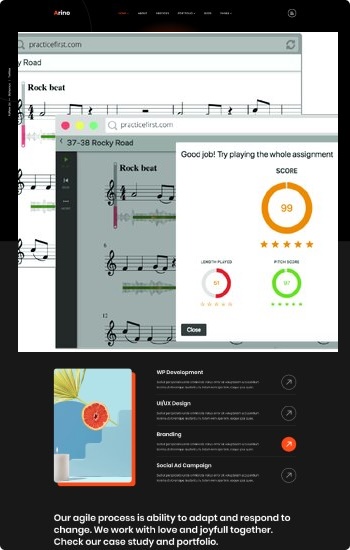

Caption music tracks

Text to music.
